The cerebellum coordinates eye and hand tracking movements

In the article and abundant reference material below, you will learn why Mendability exercises such as “Memory of weight and color” or “Maze in front of mirror” stimulate brain plasticity, improve brain function, and eventually lead to growth and health, including in the cerebellum.

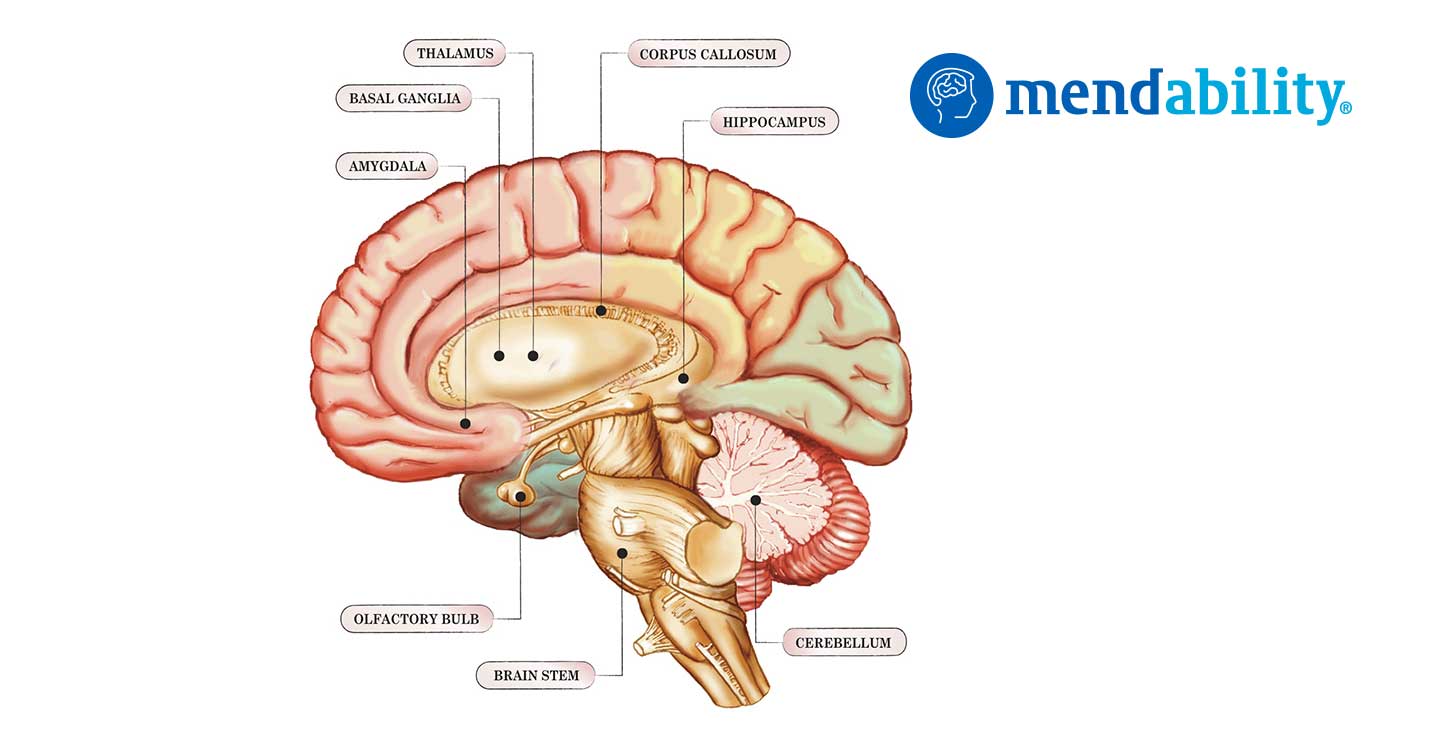

The cerebellum (Latin for little brain) is a region of the brain that plays an important role in motor control. It is also involved in some cognitive functions, such as attention and language, and in some emotional functions, such as regulating fear and pleasure responses.

Note: The text below does not include the tables from the original article. To read the article with its data you may wish to contact the authors directly.

Nature Publishing Group http://neurosci.nature.com

The cerebellum coordinates eye and hand tracking movements

R. C. Miall, G. Z. Reckess and H. Imamizu. Nature Neuroscience, volume 4, no. 6, p. 638, June 2001

Introduction

The cerebellum is thought to help coordinate movement. We tested this using functional magnetic resonance imaging (fMRI) of the human brain during visually guided tracking tasks requiring varying degrees of eye–hand coordination. The cerebellum was more active during independent rather than coordinated eye and hand tracking. However, in three further tasks, we also found parametric increases in cerebellar blood oxygenation signal (BOLD) as eye–hand coordination increased. Thus, the cerebellar BOLD signal has a non-monotonic relationship to tracking performance, with high activity during both coordinated and independent conditions.

These data provide the most direct evidence from functional imaging that the cerebellum supports motor coordination. Its activity is consistent with roles in coordinating and learning to coordinate eye and hand movement.

Manual tracking was more accurate when eye and hand target motions were synchronous (‘coordinated condition’) than when they were unrelated (‘independent condition’), with a 20% reduction in root mean squared (RMS) error and a 37% reduction in tracking lag (p < 0.001, matched pairs t-tests).

Performance when tracking with the hand alone (‘hand only’) was worse than in the coordinated eye and hand condition (17% increase in lag, p = 0.03; 3% increase in RMS error, not significant), but better than in the independent condition (RMS error and lag, p < 0.001). These differences were confirmed in laboratory conditions in an independent group of seven subjects tested in greater detail (R.C.M. & G.Z.R., Eur. J. Neurosci. 12 Suppl. 11, 40.11, 2000; R.C.M. & G.Z.R., unpublished data). Hence, as expected from existing literature (refs. 10–13), coordinated eye–hand tracking conferred a significant advantage over independent but synchronous eye–hand control and over manual tracking alone.

Manual tracking performance recorded in the MR scanner was not significantly different from that recorded from seven subjects tested in detail in the laboratory (Fig. 2). RMS errors and mean lag rose with increasing temporal offset, whereas optimal performance was achieved for the scanned group when the target for eye motion anticipated that for hand motion by some 38 ms (estimated by second-order polynomial interpolation of the heavy curves in Fig. 2). Differences in RMS errors were not significant in this group, but mean lag did vary significantly with temporal offset (F(4,40) = 2.86, p < 0.04, ANOVA).
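The optimum offset quoted above was estimated from a second-order polynomial through the performance curve. The following is only a minimal sketch of that kind of calculation, not the authors' code: the offsets and error values are invented for illustration, and `fit_quadratic` and `vertex` are hypothetical helper names.

```python
def fit_quadratic(xs, ys):
    """Least-squares fit of y = a*x**2 + b*x + c; returns (a, b, c)."""
    # Power sums for the 3x3 normal equations: s[k] = sum of x**k.
    s = [sum(x ** k for x in xs) for k in range(5)]
    t = [sum(y * x ** k for x, y in zip(xs, ys)) for k in range(3)]
    # Augmented matrix, rows ordered for the unknowns (a, b, c).
    m = [
        [s[4], s[3], s[2], t[2]],
        [s[3], s[2], s[1], t[1]],
        [s[2], s[1], s[0], t[0]],
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(3):
            if row != col:
                factor = m[row][col] / m[col][col]
                m[row] = [v - factor * p for v, p in zip(m[row], m[col])]
    return tuple(m[i][3] / m[i][i] for i in range(3))


def vertex(a, b):
    """x position of the extremum of a*x**2 + b*x + c."""
    return -b / (2 * a)


# Invented data: errors generated from a parabola whose minimum is at +40 ms.
offsets_ms = [-300, -150, 0, 150, 300]
errors = [1 + 2e-6 * (x - 40) ** 2 for x in offsets_ms]
a, b, c = fit_quadratic(offsets_ms, errors)
print(round(vertex(a, b), 3))
```

A positive `a` means the fitted curve is U-shaped, so the vertex is a minimum, which is the case of interest for an error curve.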

For subjects tested in the laboratory, both error and mean lag varied highly significantly (F(13,273) > 4.5, p < 0.0001, ANOVA), and minimum RMS error was estimated to be at an offset of 61 ms. These data support our view that the temporal asynchrony of target trajectories provided a parametric manipulation of eye–hand coordination, and also show that optimal performance was achieved when the ocular system slightly anticipated the manual system. Ocular tracking could not be recorded in the MR scanner and is not considered here in detail (R.C.M. & G.Z.R., unpublished data). However, we recorded eye position during these tasks in another group of six subjects, whose manual tracking performance confirmed the significant results listed above for both experiments 1 and 2. Eye tracking performance did not vary significantly with condition in either experiment (correlation to ocular target, correlation and lag, p > 0.05, ANOVA, n = 6).

Examination of all 360 records confirmed that the subjects were able to follow the ocular target at almost all times. The total time spent in blinks, in brief (0.2–0.5 s) fixation of the manual target (for example, Fig. 1c, at 4 s) or in very occasional mistaken pursuit of the cursor ranged across subjects from 0.2 to 1.5% of the task duration.

Coordinated versus independent eye–hand control

Contrasting ocular tracking versus fixation activated areas in extrastriate visual cortex, parietal cortex and cerebellar vermis, consistent with ocular tracking of a moving target (Table 1a; refs. 14–16).

The cortical eye fields may not be activated because the baseline task was ocular fixation. Contrasting manual tracking (‘hand only,’ Table 1b) versus rest activated the areas expected (refs. 16–18) to be involved in control of right hand motion within contralateral sensory-motor and premotor areas, and bilateral areas in basal ganglia and ipsilateral cerebellum.

Interaction terms of the factorial analysis (see Methods) exposed regions significantly more active in the coordinated condition than expected by summation of their activity in ocular and hand-only conditions, that is, (coordinated + rest) – (hand only + eye only), with activation of precuneus, extrastriate visual cortex, and weaker activation of prefrontal and ventral premotor regions (Table 2a). Bilateral lateral cerebellum was also activated, replicating an earlier fMRI tracking experiment (ref. 8).
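The interaction term above can be read as a contrast over per-condition activity estimates. The sketch below illustrates only the arithmetic of that contrast for a single voxel; the activity values are invented, and the names `INTERACTION` and `contrast` are hypothetical, not taken from the authors' analysis software.

```python
# The four terms that enter the interaction contrast.
CONDITIONS = ("rest", "eye_only", "hand_only", "coordinated")

# Weights for (coordinated + rest) - (hand only + eye only).
INTERACTION = {"rest": 1, "eye_only": -1, "hand_only": -1, "coordinated": 1}


def contrast(betas, weights):
    """Weighted sum of per-condition activity estimates for one voxel."""
    return sum(weights[name] * betas[name] for name in CONDITIONS)


# Invented voxel whose coordinated response is the plain sum of eye and hand.
additive = {"rest": 0.0, "eye_only": 1.0, "hand_only": 2.0, "coordinated": 3.0}

# Invented voxel that responds more than the sum during coordination.
superadditive = {"rest": 0.0, "eye_only": 1.0, "hand_only": 2.0, "coordinated": 4.5}

print(contrast(additive, INTERACTION))       # 0.0
print(contrast(superadditive, INTERACTION))  # 1.5
```

A voxel that simply sums its eye-only and hand-only responses scores zero, so a positive value indicates activity specific to the coordinated condition.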

Directly contrasting activity during coordinated and independent conditions again resulted in significant bilateral activation of precuneus, right ventral premotor cortex, left superior and inferior parietal cortex, and right basal ganglia (Table 2b); however, this contrast did not activate cerebellum. Conversely, contrast of independent against coordinated tracking significantly activated only the medial cerebellum with peaks within the cerebellar nuclei (Table 2c).

Fig. 1. Tracking task. (a) Subjects tracked a displayed moving circle (blue trajectory) with eye movement. They also used a joystick to correct for target motion of a square cursor away from a central crosshair. Red, on-screen cursor trajectory. Accurate tracking in the coordinated condition led to movement of the joystick along the same trajectory as the eyes. (b–e) Four factorial conditions: hand only (b), eye only (c), coordinated (d) and independent (e). For clarity, only horizontal components of the trajectories are shown. Target trajectories for the hand (dark red) and for the eye (yellow) are the smooth lines; the trajectories of the joystick (orange) and the eye (green) are more jerky. (f) An example of the –304 ms temporal offset condition of experiment 2, with manual target trajectory leading the ocular trajectory.

Fig. 2. Effect of eye–hand temporal offset on manual tracking performance. Positive offset values indicate that the target for eye movement anticipated that for hand movement; negative values indicate the reverse. (a) Mean RMS errors normalized to the synchronous condition. Heavy line, mean performance data (± 1 s.e.m.) from the MR scanning sessions of experiment 2 (n = 9). Fine lines, mean data from another 7 subjects tested at target speeds 35% slower (triangle), same (circle) or 35% faster (square) than used in the fMRI experiment; independent eye–hand data is at the far right. (b) Mean tracking lag, estimated from cross-correlation of joystick and manual target trajectories.

Fig. 3. Parametric analysis of BOLD related to eye–hand temporal offset. Significant activity in experiment 2 was constrained entirely to the cerebellum (cluster, p = 0.0006, random effects group analysis, n = 9). Axial slices are at 5 mm separation from –46 mm relative to the AC-PC line (ref. 48). The right brain is on the left of each image.

Parametric variation of eye–hand coordination

There were highly significant performance differences between the coordinated and independent conditions of experiment one, whereas the enforced eye–hand asynchrony in experiment two introduced smaller, graded performance changes within the same general tracking task. We therefore tested for BOLD activation in the second experiment fitted by quadratic regression to the temporal offset parameter.

A positive relationship would expose areas that co-varied with the U-shaped RMS error and tracking lag performance curves (Fig. 2). No areas were found to be activated at normal statistical significance levels for this positive model (p > 0.01, fixed-effects group analysis).

Conversely, a negative relationship would expose areas that were maximally active when performance was good and less active when performance was degraded by the eye–hand asynchrony. This model resulted in a large activation pattern within the cerebellum, statistically significant with both fixed-effects and the more conservative random-effects group analyses.

Peak activation loci were in the bilateral dentate nuclei and in the adjacent cerebellar cortex. No significant activity was seen in any other brain area.
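The positive and negative models described above differ only in the sign of the relationship between activity and a quadratic function of the temporal offset. As a rough sketch of that idea (the study fitted a general linear model to the full time series, which this does not reproduce), the example below regresses invented per-condition signal values on a squared-offset regressor. The offsets, the signal values and the function names are all assumptions made for illustration.

```python
def quadratic_regressor(offsets_ms):
    """Mean-centred squared offset: high for large asynchrony, low near zero."""
    squared = [o ** 2 for o in offsets_ms]
    mean = sum(squared) / len(squared)
    return [s - mean for s in squared]


def slope(regressor, signal):
    """Ordinary least-squares slope of signal on a mean-centred regressor."""
    mean_signal = sum(signal) / len(signal)
    num = sum(r * (y - mean_signal) for r, y in zip(regressor, signal))
    den = sum(r * r for r in regressor)
    return num / den


# Invented offsets (ms) and an invented signal that peaks at zero offset.
offsets_ms = [-300, -150, 0, 150, 300]
inverted_u_signal = [1.0, 1.6, 2.0, 1.6, 1.0]

k = slope(quadratic_regressor(offsets_ms), inverted_u_signal)
# k > 0: signal follows the U-shaped error curve (the "positive" model).
# k < 0: signal is highest when coordination is best (the "negative" model).
print("negative model" if k < 0 else "positive model")
```

With these invented values the slope is negative, which corresponds to the inverted-U pattern reported for the cerebellum in experiment 2.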

The relationship between performance and BOLD

The factorial design of experiment 1 demonstrated that the cerebellum was more activated during the independent condition, when performance errors were high, than in the coordinated condition. The parametric design of experiment 2 showed that it was more activated during the coordinated condition, when performance errors were low, than in the temporal offset conditions. These results imply a non-monotonic relationship between performance error and BOLD signal within the cerebellum.

We tested this prediction with additional combined experiments in which subjects were tested in the same session with the coordinated condition (zero offset), different temporal offset conditions, and the independent condition used in experiment 1. BOLD signal was fitted by weighted regression to these task parameters as before. In experiment 3, our regression model assumed the same inverted-U function as used in experiment 2, combined with a high positive weighting for the independent condition (see Methods). Significant bilateral cerebellar activity was found, with clear overlap in activation patterns between experiments 2 and 3. In experiment 4, we tested for a dip in BOLD signal within the monotonically increasing performance curves. The signal from the cerebellum was significantly fitted by the quadratic U-shaped model. No areas were significantly activated by an inverted-U model.

As predicted, both contrasts of the coordinated (zero offset) and the independent condition with the –304 ms condition resulted in significant cerebellar activation. There was about 50% overlap of the activation patterns within the cerebellum, in crus I and lobules VII and VIII.

DISCUSSION

We show that the cerebellum was significantly activated in coordinated eye–hand tracking compared to isolated eye and hand movements, and that the cerebellum and only the cerebellum varied its activity in parametric relationship with the temporal offset used to vary eye–hand coordination. We confirmed these results in additional combined experiments, exposing a non-monotonic relationship between tracking error and cerebellar BOLD signal.

Functional imaging cannot be used to claim causal relationships. However, the improvement in tracking performance that we observed when eye–hand tracking was nearly synchronous must result from involvement of an active coordinating process, because coordinated eye–hand tracking was better than movement of the hand alone. Hence, these results argue strongly that cerebellar activity underlies the good performance in the eye–hand coordinated conditions of our task.

The lack of cerebellar activity in the direct contrast of coordinated versus independent conditions in the first factorial experiment was initially puzzling. Confirmation of this result in two combined parametric experiments showed that the cerebellum was more heavily activated in the independent task, during correction of and learning from the significant movement errors. Significant activity was indeed found in the contrast between the independent condition (when tracking errors were 20% higher) and the coordinated condition. Similar activity was seen in the contrast of the independent condition with the –304 ms condition. This activation might also be related to factors other than movement error, as the independent task probably required higher attentional levels and involved more independent stimulus–response mapping, but these were not independently manipulated here.

However, cerebellar activity was not simply related to conditions with high movement errors. We found significant and robust cerebellar activation in the coordinated condition of each parametric experiment, exposing a positive relationship between improved performance and BOLD signal. Thus, large tracking errors seem to induce measurable activity within the cerebellum that can hide smaller activity changes related to coordination.

In motor learning tasks, high cerebellar activity related to performance errors is typically seen early on. Weaker cerebellar activation sustained after the motor learning is also seen. The activity patterns we saw are consistent with this. It is also striking that the precuneus was significantly more active in the coordinated condition than in the independent eye–hand condition. The precuneus has not been reported to be involved in coordination but is active in visual imagery, visual–spatial processing and navigation, and attentive tracking. Our coordinated tracking task gives the subject opportunities to visualize the required manual trajectories from visual cues and from extraretinal signals. Thus, activation of the precuneus.

Combined independent and temporal offset conditions. Significant activity is shown that was modeled by both independent tracking and eye–hand temporal offset parameters.

Motor coordination depends on predictive information about movement. Synchronous movement of two effectors cannot be achieved simply by reaction to reafferent proprioceptive or visual inputs. In our factorial experiment, coordinated tracking was more accurate than tracking with hand movement alone. Hence, a predictive ‘forward model’ estimate of the movement outcome based on motor commands being sent to one effector is probably used to program or modify the movement of the other effectors. We believe that the experiments reveal the activation of these internal models, exposed by their sensitivity to the eye–hand temporal asynchrony.

Finally, we address a question of functional imaging interpretation common to motor coordination and to learning. If the cerebellum is the neural center for coordination, we might expect it to try to coordinate eye–hand movements under all circumstances, even if it fails in some. Why, then, do neural responses and BOLD signals change across our conditions? Recent theory suggests that the cerebellar learning process may include active selection of control modules based on the goodness of fit of their forward modeling of current sensory–motor conditions. We suppose that the active models contribute to the BOLD signal in the coordinated condition. However, in the unusual circumstance when eye–hand asynchrony was enforced, modules that normally predict synchronous eye–hand relationships would not be selected, and the BOLD signal would be low. New control modules more appropriate for these temporal asynchronies would not yet be learned within the brief and randomly presented trials at each condition. Thus, as demonstrated, the cerebellum would be relatively inactive.

In conclusion, previous imaging studies using parametric variations of movement parameters have shown responses distributed throughout the motor system. Our study has exposed activity constrained to the cerebellum and is thus powerful support for its role, suggested from physiology and theory, in the coordination of eye and hand movements.

METHODS

Subjects. Nine right-handed subjects (4 male, 5 female, 21–55 years old) performed two tracking experiments in a single scanning session, after pre-training in the laboratory. Another group of seven (3 female, 18–21 years old) performed experiment 3. Three of the original 9 subjects performed experiment 4, one year after the first two. Subjects gave signed informed consent, and experiments were approved by the Central Oxford Research Ethics Committee.

Tracking protocol. Subjects lay supine in the MR magnet and used prismatic glasses to view a rear projection screen placed 2.8 m from their eyes. The 13° × 10° display was generated by a PC, projecting at VGA resolution (640 × 480) with an LCD projector. Vision was uncorrected but all subjects could see the screen display without difficulty. Subjects held a lightweight, custom-made joystick in their right hands; movement of the joystick in two dimensions was encoded by rotation of polarized disks, detected by fiber optics, converted to voltage signals and sampled at 26 Hz. A square green cursor (0.2° × 0.2°) was controlled by the joystick; subjects were instructed to center this cursor on a large stationary cross hair. In manual tracking conditions (that is, using the hand-held joystick), the cursor was actively displaced from the cross hair following a target waveform, and the task was to compensate for this motion (‘compensatory tracking’, ref. 45). Movement of the tip of the joystick of approximately 6 cm (75° of joystick motion) was required, using thumb, finger and wrist movements. The target for all ocular tracking movements was a white circle, 0.2° in diameter. Eye movement was not recorded in the MR environment but was recorded monocularly in six subjects performing experiments 1 and 2 using an ASL 501 two-dimensional infrared reflectometry eye tracker outside the scanner (R.C.M. & G.Z.R., unpublished data).

Accurate tracking of the ocular target required eye movement of up to 10° horizontally, 7.5° vertically and maximum speeds of approximately 6° per second. Manual tracking performance was quantified by calculating RMS error between the cursor position and the cross hairs, and by cross correlation of joystick and manual target trajectories. RMS error varied from trial to trial because of the difference in mean target speed (randomized target trajectories); hence, all RMS errors were normalized by mean target speed per trial (ref. 22). Peak cross-correlation coefficients were high (mean r² per subject per condition > 0.98) and thus an insensitive performance measure; only correlation lag and normalized RMS errors are reported.

Imaging. Echo-planar imaging (TE, 30 ms; flip angle, 90°) was done with a 3 T Siemens-Varian scanner, with whole brain images in the axial plane. Field of view was 256 × 256 mm (64 × 64 voxels), and 21 slices (7 mm) were acquired at a TR of 3 s. For experiment 1, 212 volumes were acquired (10 min 22 s); 290 volumes were acquired for experiment 2 (14 min 30 s). Finally, a T1-weighted structural image was taken, 256 × 256 × 21 voxels. For experiments 3 and 4, 426 or 402 volumes were acquired (21.5 or 20 min) with 25 slices of 5.5 mm at a TR of 3 s.

Image analysis. EPI images were motion corrected to the tenth volume of each series using AIR (ref. 46). They were then spatially filtered, and transformed to a common space by registration of the EPI image to the structural image with a 6-degrees-of-freedom linear transform and of the structural image to the MNI-305 average brain with a 12-degrees-of-freedom affine transform.

Analysis was carried out using FEAT, the FMRIB FSL extension of MEDx (Sensor Systems, Sterling, Virginia). Data were analyzed using general linear modeling within the FSL libraries with local autocorrelation correction. Statistical parametric maps were combined across subjects with fixed-effect models; random-effect modeling was used for experiment two. Regions of significant activation in the group Z (Gaussianized T) statistic images were found by thresholding at Z = 2.3 and then detecting significant clusters based on Gaussian field theory at a probability level of p < 0.01 (ref. 47). Significant clusters were rendered as color images onto the MNI-305 standard image. Locations of cluster maxima and local maxima are reported; identification of maxima was examined in the median images of the subjects’ brains by reference to published atlases.
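The two manual tracking measures described under Tracking protocol, normalized RMS error and cross-correlation lag, can be illustrated with a short sketch. This is a simplified one-dimensional version written for illustration, not the authors' implementation: the function names, the test signal and the three-sample delay are assumptions, and only the 26 Hz sampling rate comes from the text.

```python
import math


def normalized_rms_error(cursor_error, mean_target_speed):
    """RMS distance of the cursor from the cross hair, divided by target speed."""
    rms = math.sqrt(sum(e * e for e in cursor_error) / len(cursor_error))
    return rms / mean_target_speed


def _pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var) if var > 0 else 0.0


def tracking_lag(target, response, sample_rate_hz, max_shift):
    """Lag in seconds at which the response correlates best with the target.

    Positive values mean the response trails the target.
    """
    def corr_at(shift):
        # Pair each target sample with the response sample `shift` steps later.
        pairs = [(target[i], response[i + shift])
                 for i in range(len(target))
                 if 0 <= i + shift < len(response)]
        return _pearson([p[0] for p in pairs], [p[1] for p in pairs])

    best = max(range(-max_shift, max_shift + 1), key=corr_at)
    return best / sample_rate_hz


# Invented example: a slow sine target and a response delayed by 3 samples.
RATE_HZ = 26
w = 2 * math.pi * 0.3 / RATE_HZ
target = [math.sin(w * i) for i in range(200)]
response = [math.sin(w * (i - 3)) for i in range(200)]
print(tracking_lag(target, response, RATE_HZ, max_shift=10))
```

Dividing the RMS error by the mean target speed of each trial, as the text describes, keeps trials with faster random trajectories from appearing worse simply because the target moved more.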

References

Flourens, P. The Human Brain and Spinal Cord (eds. Clarke, E. & O’Malley, C. D.) 657–661 (Univ. California Press, Berkeley, 1968).

Bastian, A. J., Martin, T. A., Keating, J. G. & Thach, W. T. Cerebellar ataxia: abnormal control of interaction torques across multiple joints. J. Neurophysiol. 76, 492–509 (1996).

Muller, F. & Dichgans, J. Dyscoordination of pinch and lift forces during grasp in patients with cerebellar lesions. Exp. Brain Res. 101, 485–492 (1994).

Serrien, D. J. & Wiesendanger, M. Temporal control of a bimanual task in patients with cerebellar dysfunction. Neuropsychologia 38, 558–565 (2000).

Van Donkelaar, P. & Lee, R. G. Interactions between the eye and hand motor systems: disruptions due to cerebellar dysfunction. J. Neurophysiol. 72, 1674–1685 (1994).

6. Vercher, J. L. & Gauthier, G. M. Cerebellar involvement in the coordination control of the oculo-manual tracking system: effects of cerebellar dentate nucleus lesion. Exp. Brain Res. 73, 155–166 (1988).

Miall, R. C. The cerebellum, predictive control and motor coordination. Novartis Found. Symp. 218, 272–290 (1998).

Miall, R. C., Imamizu, H. & Miyauchi, S. Activation of the cerebellum in coordinated eye and hand tracking movements: an fMRI study. Exp. Brain Res. 135, 22–33 (2000).

Marple-Horvat, D. E., Criado, J. M. & Armstrong, D. M. Neuronal activity in the lateral cerebellum of the cat related to visual stimuli at rest, visually guided step modification, and saccadic eye movements. J. Physiol. (Lond.) 506, 489–514 (1998).

Abrams, R. A., Meyer, D. E. & Kornblum, S. Eye-hand coordination: oculomotor control in rapid aimed limb movements. J. Exp. Psychol. 16, 248–267 (1990).

Koken, P. W. & Erkelens, C. J. Influences of hand movements on eye movements in tracking tasks in man. Exp. Brain Res. 88, 657–664 (1992).

Vercher, J. L., Magenes, G., Prablanc, C. & Gauthier, G. M. Eye–head–hand coordination in pointing at visual targets: spatial and temporal analysis. Exp. Brain Res. 99, 507–523 (1994).

Biguer, B., Prablanc, C. & Jeannerod, M. The contribution of coordinated eye and head movements in hand pointing accuracy. Exp. Brain Res. 55, 462–469 (1984).

Petit, L. & Haxby, J. V. Functional anatomy of pursuit eye movements in humans as revealed by fMRI. J. Neurophysiol. 82, 463–471 (1999).

Berman, R. A. et al. Cortical networks subserving pursuit and saccadic eye movements in humans: an FMRI study. Hum. Brain Mapp. 8, 209–225 (1999).

Nishitani, N. Cortical visuomotor integration during eye pursuit and eye-finger pursuit. J. Neurosci. 19, 2647–2657 (1999).

Grafton, S. T., Mazziotta, J. C., Woods, R. P. & Phelps, M. E. Human functional anatomy of visually guided finger movements. Brain 115, 565–587 (1992).

Jueptner, M., Jenkins, I. H., Brooks, D. J., Frackowiak, R. S. J. & Passingham, R. E. The sensory guidance of movement: a comparison of the cerebellum and basal ganglia. Exp. Brain Res. 112, 462–474 (1996).

Turner, R. S., Grafton, S. T., Votaw, J. R., DeLong, M. R. & Hoffman, J. M. Motor subcircuits mediating the control of movement velocity: a PET study. J. Neurophysiol. 80, 2162–2176 (1998).

Pelisson, D., Prablanc, C., Goodale, M. A. & Jeannerod, M. Visual control of reaching movements without vision of the limb. II. Evidence of fast unconscious processes correcting the trajectory of the hand to the final position of a double-step stimulus. Exp. Brain Res. 62, 303–311 (1986).

Lewis, R. F., Gaymard, B. M. & Tamargo, R. J. Efference copy provides the eye position information required for visually guided reaching. J. Neurophysiol. 80, 1605–1608 (1998).

Miall, R. C., Weir, D. J. & Stein, J. F. Visuomotor tracking with delayed visual feedback. Neuroscience 16, 511–520 (1985).

Vercher, J. L. & Gauthier, G. M. Oculo-manual coordination control: ocular and manual tracking of visual targets with delayed visual feedback of the hand motion. Exp. Brain Res. 90, 599–609 (1992).

Wolpert, D. M. Multiple paired forward and inverse models for motor control. Neural Net. 11, 1317–1329 (1998).

Waldvogel, D., van Gelderen, P., Ishii, K. & Hallett, M. The effect of movement amplitude on activation in functional magnetic resonance imaging studies. J. Cereb. Blood Flow Metab. 19, 1209–1212 (1999).

Vanmeter, J. W. et al. Parametric analysis of functional neuroimages–application to a variable-rate motor task. Neuroimage 2, 273–283 (1995).

Jenkins, I. H., Passingham, R. E. & Brooks, D. J. The effect of movement frequency on cerebral activation: a positron emission tomography study. J. Neurol. Sci. 151, 195–205 (1997).

Winstein, C. J., Grafton, S. T. & Pohl, P. S. Motor task difficulty and brain activity: Investigation of goal-directed reciprocal aiming using positron emission tomography. J. Neurophysiol. 77, 1581–1594 (1997).

Poulton, E. C. Tracking Skill and Manual Control (Academic, New York, 1974).

Woods, R. P., Grafton, S. T., Holmes, C. J., Cherry, S. R. & Mazziotta, J. C. Automated image registration: I. General methods and intrasubject, intramodality validation. J. Comput. Assist. Tomogr. 22, 141–154 (1998).

Friston, K. J., Worsley, K. J., Frackowiak, R. S. J., Mazziotta, J. C. & Evans, A. C. Assessing the significance of focal activations using their spatial extent. Hum. Brain Mapp. 1, 214–220 (1994).

Talairach, J. & Tournoux, P. Co-Planar Stereotaxic Atlas of the Human Brain (Thieme, Stuttgart, 1988).

49. Schmahmann, J. D. et al. Three-dimensional MRI atlas of the human cerebellum in proportional stereotaxic space. Neuroimage 10, 233–260 (1999).

Jenkins, I. H., Brooks, D. J., Nixon, P. D., Frackowiak, R. S. J. & Passingham, R. E. Motor sequence learning: a study with positron emission tomography. J. Neurosci. 14, 3775–3790 (1994).

Clower, D. M. et al. Role of posterior parietal cortex in the recalibration of visually guided reaching. Nature 383, 618–621 (1996).

Imamizu, H. et al. Human cerebellar activity reflecting an acquired internal model of a new tool. Nature 403, 192–195 (2000).

Flament, D., Ellermann, J. M., Kim, S. G., Ugurbil, K. & Ebner, T. J. Functional magnetic resonance imaging of cerebellar activation during the learning of a visuomotor dissociation task. Hum. Brain Mapp. 4, 210–226 (1996).

Allen, G., Buxton, R. B., Wong, E. C. & Courchesne, E. Attentional activation of the cerebellum independent of motor involvement. Science 275, 1940–1943 (1997).

Ivry, R. Cerebellar timing systems. Int. Rev. Neurobiol. 41, 555–573 (1997).

Shadmehr, R. & Holcomb, H. H. Neural correlates of motor memory consolidation. Science 277, 821–825 (1997).

Roland, P. E. & Gulyas, B. Visual memory, visual imagery, and visual recognition of large field patterns by the human brain: functional anatomy by positron emission tomography. Cereb. Cortex 5, 79–93 (1995).

Fletcher, P. C., Shallice, T., Frith, C. D., Frackowiak, R. S. & Dolan, R. J. Brain activity during memory retrieval. The influence of imagery and semantic cueing. Brain 119, 1587–1596 (1996).

Ghaem, O. et al. Mental navigation along memorized routes activates the hippocampus, precuneus, and insula. Neuroreport 8, 739–744 (1997).

Tamada, T., Miyauchi, S., Imamizu, H., Yoshioka, T. & Kawato, M. Cerebro-cerebellar functional connectivity revealed by the laterality index in tool-use learning. Neuroreport 10, 325–331 (1999).

Van Donkelaar, P., Lee, R. G. & Gellman, R. S. The contribution of retinal and extraretinal signals to manual tracking movements. Exp. Brain Res. 99, 155–163 (1994).

Ferraina, S. et al. Visual control of hand-reaching movement: activity in parietal area 7m. Eur. J. Neurosci. 9, 1090–1095 (1997).

Vercher, J. L. et al. Self-moved target eye tracking in control and deafferented subjects: roles of arm motor command and proprioception in arm–eye coordination. J. Neurophysiol. 76, 1133–1144 (1996).

Witney, A. G., Goodbody, S. J. & Wolpert, D. M. Predictive motor learning of temporal delays. J. Neurophysiol. 82, 2039–2048 (1999).

Miall, R. C. & Wolpert, D. M. Forward models for physiological motor